Postman for Testing API: When Collections Stop Scaling

Postman for testing API is the default for a lot of teams because it makes the first 80 percent easy: send requests, poke at responses, share a collection. The problem is what happens after the first suite “works”. Collections grow into a monolith, CI becomes a fragile Newman job, and every change turns into a debate about hidden state, scripts, and why the JSON export diff is unreadable.

This post is about that inflection point: when Postman collections stop scaling as an API test artifact, and what “scaling” looks like for experienced teams who want deterministic CI gates, Git review, and reliable request chaining.

Where Postman collections start hurting (even if the API tests “pass”)

Postman is great for interactive exploration and ad hoc validation. The scaling problems appear when you try to use the same artifact (a collection) as:

- A long-lived regression suite

- A PR-reviewable change set

- A deterministic CI gate

- A shared, multi-environment workflow contract

1) The artifact is not code-review friendly

A Postman collection is JSON. That is not inherently bad, but in practice:

- Diffs are noisy (reordered keys, regenerated IDs, UI-driven metadata changes).

- The meaning of a change is rarely obvious in a PR.

- Reviewers end up “trusting” the author instead of reviewing the test.

Postman’s own docs treat collections as a product artifact managed inside the app, with export/import as an integration mechanism, not as a primary code interface. (See Postman docs on collections.)

2) Hidden state becomes the real test framework

As collections scale, they often rely on:

- Collection-level variables and environment variables that are mutated over time

- Pre-request scripts and test scripts that implement implicit ordering

- Shared “setup” requests that must run before everything else

That works until you need to:

- Run tests in parallel

- Shard tests across CI workers

- Re-run a single workflow deterministically

- Make failures reproducible without “replaying” the whole collection

The result is usually a suite that is technically automated but operationally manual.

3) Newman is a runner, not a scaling model

Newman helps you execute Postman collections in CI. It does not solve the structural issues above. In many teams, the Newman job becomes a single, long-running step with:

- A large collection file checked into Git

- A pile of environment files

- Custom scripting glue around reporting and secrets

You can get it working, but the suite often remains hard to shard, hard to review, and easy to accidentally destabilize.

4) Collaboration still happens “in the UI”

When the canonical workflow lives in a GUI workspace, a few things tend to follow:

- Changes are discussed outside PRs

- The source of truth becomes “what’s in Postman right now”

- Environments drift because different people have different local setups

That is not a moral failing, it is a tooling mismatch. Git workflows assume the artifact is text-first, stable, and reviewable.

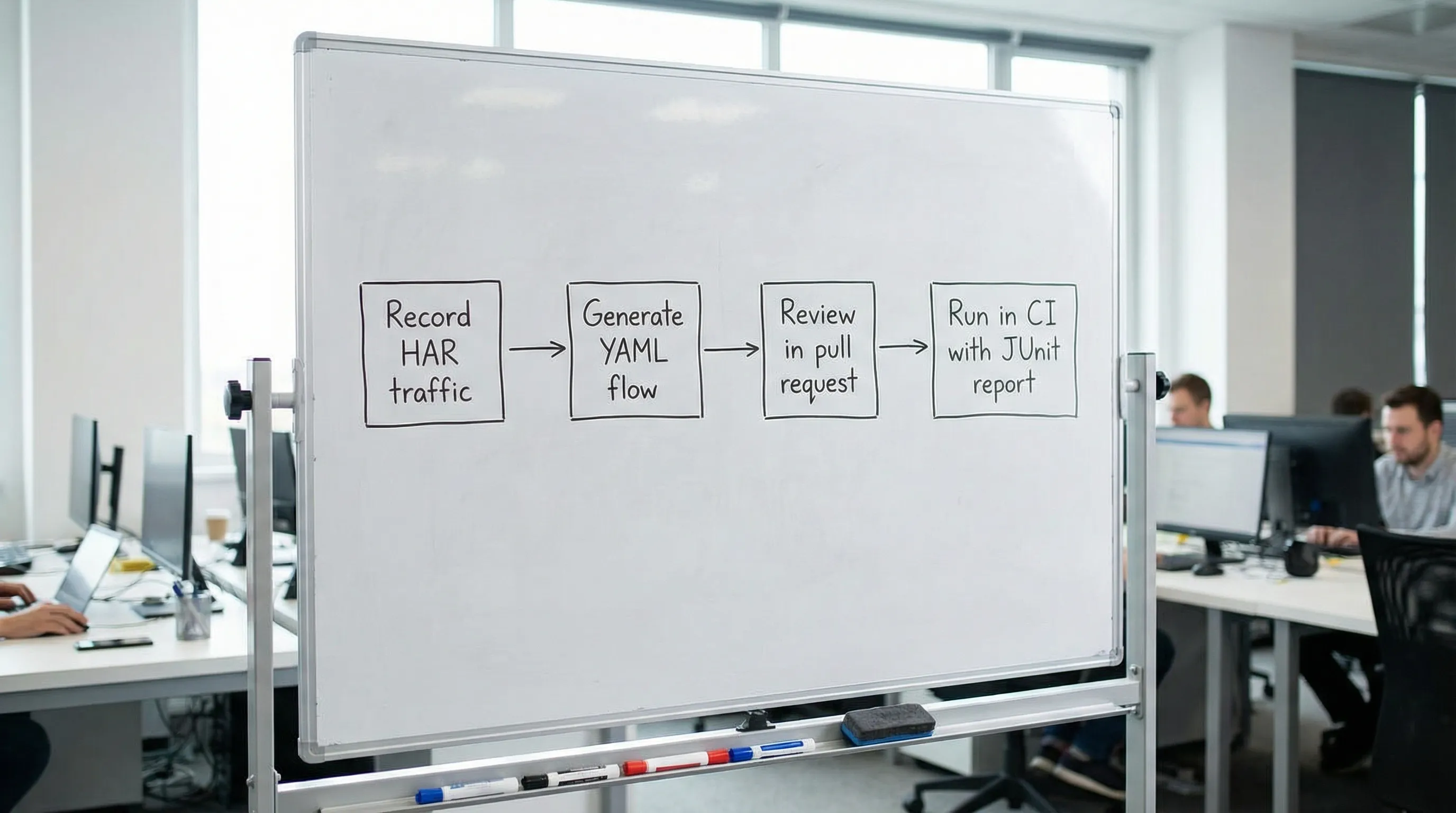

What scaling looks like for API test artifacts

For experienced teams, “scaling” API testing usually means:

- Git is the source of truth for test definitions

- Tests are reviewed like production code (small diffs, clear semantics)

- CI runs are deterministic (pinned runner, explicit inputs, predictable outputs)

- Workflows are composable and shardable (file-based suites, parallel-safe)

- Request chaining is explicit (data dependencies are visible, not “magic globals”)

This is where YAML-first flows tend to win, not because YAML is trendy, but because it is a practical format for humans and CI systems.

YAML-first workflows: explicit chaining you can review

A YAML flow makes dependencies and data extraction visible. Instead of “some script set a variable somewhere”, you see exactly:

- Which request produced the value

- How it was extracted

- Where it is used

- What assertions guard the workflow

Below is a representative multi-step flow (auth → create → read → delete), written as native YAML (the kind of file you can diff in a PR).

name: user-crud-smoke

vars:

base_url: ${BASE_URL}

email: ${TEST_EMAIL}

password: ${TEST_PASSWORD}

steps:

- name: login

request:

method: POST

url: "${base_url}/v1/auth/login"

headers:

content-type: application/json

body:

email: "${email}"

password: "${password}"

assert:

- status: 200

- jsonpath: "$.accessToken"

exists: true

extract:

access_token:

jsonpath: "$.accessToken"

- name: create-user

request:

method: POST

url: "${base_url}/v1/users"

headers:

authorization: "Bearer ${steps.login.access_token}"

content-type: application/json

body:

displayName: "ci-smoke-${RUN_ID}"

assert:

- status: 201

- jsonpath: "$.id"

exists: true

extract:

user_id:

jsonpath: "$.id"

- name: get-user

request:

method: GET

url: "${base_url}/v1/users/${steps.create-user.user_id}"

headers:

authorization: "Bearer ${steps.login.access_token}"

assert:

- status: 200

- jsonpath: "$.displayName"

equals: "ci-smoke-${RUN_ID}"

- name: delete-user

request:

method: DELETE

url: "${base_url}/v1/users/${steps.create-user.user_id}"

headers:

authorization: "Bearer ${steps.login.access_token}"

assert:

- status: 204

A few scaling-relevant details to notice:

- Inputs are explicit:

BASE_URL, credentials, andRUN_IDcome from the environment, not from a UI workspace. - Chaining is local and traceable:

${steps.create-user.user_id}is an explicit data dependency. - Assertions are part of the artifact: failures tell you which invariant broke, not just “the script failed”.

If you are testing real workflows (not single endpoints), this approach stays readable as the number of steps grows. For deeper patterns (polling, branching, eventual consistency), see the more workflow-focused guide: API Workflow Automation: Testing Multi-Step Business Logic.

The scaling breakpoint: collections vs flows

Collections tend to become “test programs” where control flow lives in scripts and shared state. YAML flows push you toward “test definitions” where:

- The runner owns execution mechanics

- The file owns the contract (requests, assertions, extraction, dependencies)

That distinction matters when you need to do normal software-engineering things.

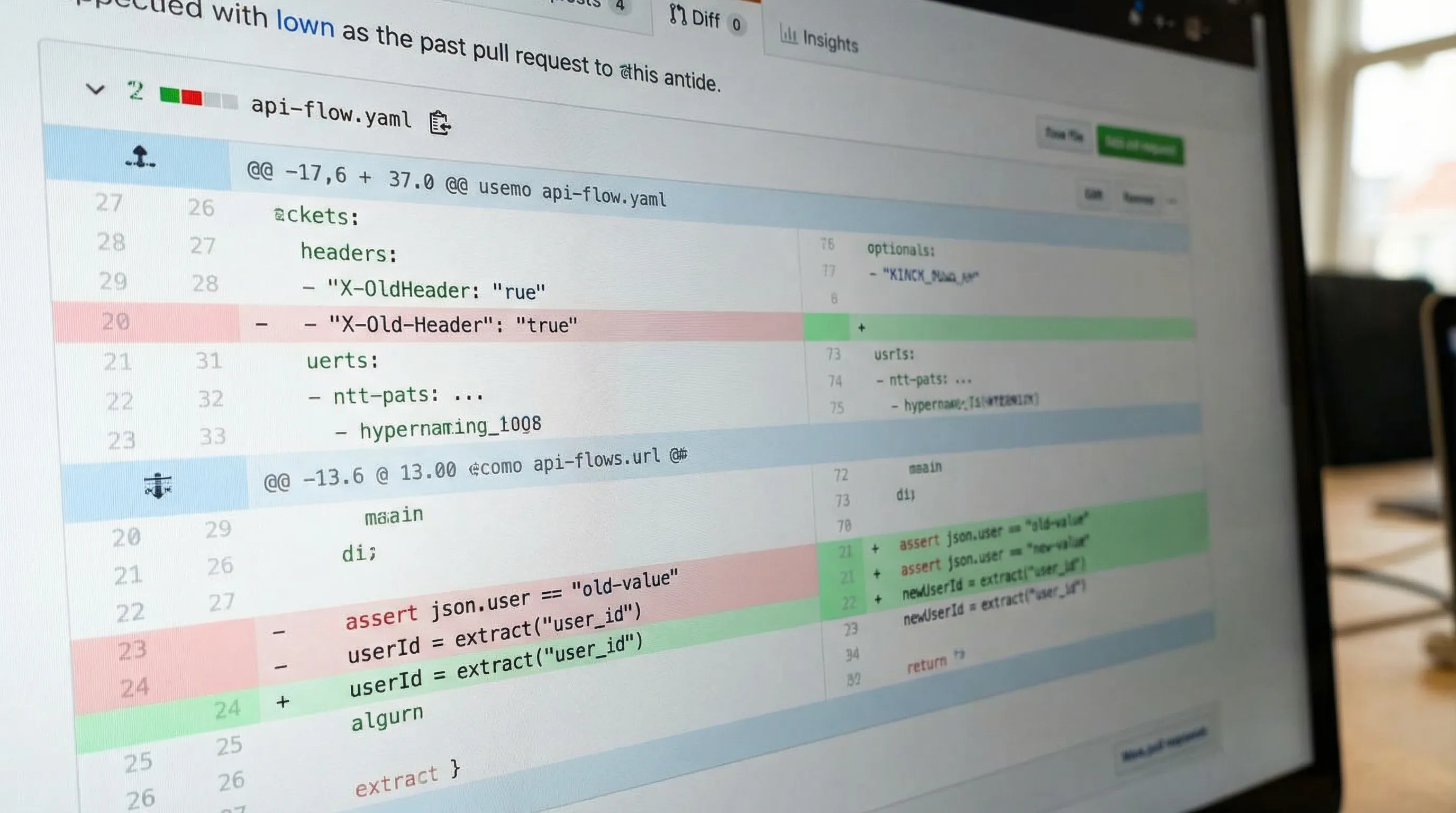

PR review: readable diffs vs regenerated exports

In a Git workflow, reviewers need to answer:

- What changed?

- Is the new behavior intentional?

- Is it deterministic?

YAML supports that because changes are usually localized to a few lines. Collections often produce diffs that are structurally correct but semantically opaque.

Parallelism and sharding: file boundaries that map to CI jobs

Scaling CI usually means parallel execution. That requires:

- Tests that do not share mutable global state

- Suites that can be split into independent units

A practical pattern is file-based sharding where each flow file is independently runnable. DevTools’ CI material goes deep on this (smoke vs regression, parallel jobs, JUnit output) in: API Testing in CI/CD: From Manual Clicks to Automated Pipelines.

Determinism: remove UI drift and runtime ambiguity

For CI gates, determinism is not a nice-to-have. It is the difference between:

- A failure that blocks a merge for a real regression

- A failure that gets retried until it “goes green”

YAML-first suites make it easier to enforce determinism via:

- Stable naming (step names become test IDs)

- Explicit dependencies

- Standardized environment contracts

- Reviewable assertion strategy

If your current suite flakes due to response volatility, timestamps, unordered arrays, or pagination, it is usually an assertion problem, not a runner problem. See: Deterministic API Assertions: Stop Flaky JSON Tests in CI.

Postman vs Newman vs Bruno vs native YAML (practical comparison)

Bruno deserves mention because it moves toward local files and Git. But the important question is: what is the canonical test definition format?

| Tooling approach | Primary test format | Reviewability in Git | CI ergonomics | Chaining model |

|---|---|---|---|---|

| Postman app | Workspace + exported collection JSON | Often noisy diffs, UI metadata | Indirect (usually via Newman) | Variables + scripts, often implicit |

| Newman | Runs Postman collection JSON | Same artifact limitations | Common, but scaling relies on conventions and glue | Same as collection (scripts/state) |

| Bruno | File-based (tool-specific format) | Better than collection JSON, still tool-specific | Possible, still depends on its ecosystem | Tool-defined primitives |

| DevTools | Native YAML flows | Designed for small, semantic diffs | CI-native runner, local-first | Explicit extraction + step references |

The takeaway is not “format wars”. The takeaway is that native YAML reduces the distance between what humans review and what CI executes.

A scalable repo shape (without inventing a whole framework)

You do not need a complicated structure, but you do need intentional boundaries:

api-tests/

flows/

smoke/

auth-smoke.yaml

user-crud-smoke.yaml

regression/

billing-happy-path.yaml

billing-refunds.yaml

env/

staging.env

prod.env

fixtures/

users.json

This layout supports:

- Fast PR gates (smoke)

- Slower post-merge or nightly runs (regression)

- Parallel execution (each file is a shard unit)

For conventions that keep diffs stable over time (sorted headers, stable key ordering, naming rules), see: YAML API Test File Structure: Conventions for Readable Git Diffs.

CI/CD integration: keep it boring

A good CI integration is one you do not think about. The minimum bar is:

- Install a pinned runner

- Run flows

- Emit machine-readable reports (JUnit/JSON)

- Upload artifacts for debugging

A minimal GitHub Actions shape looks like this (intentionally short, your org will add caching, sharding, and artifacts):

name: api-tests

on:

pull_request:

jobs:

smoke:

runs-on: ubuntu-24.04

steps:

- uses: actions/checkout@v4

- name: Run smoke flows

env:

BASE_URL: ${{ secrets.BASE_URL }}

TEST_EMAIL: ${{ secrets.TEST_EMAIL }}

TEST_PASSWORD: ${{ secrets.TEST_PASSWORD }}

RUN_ID: ${{ github.run_id }}

run: |

devtools run api-tests/flows/smoke --report-junit junit.xml

If you are currently using Newman as the CI runner, the practical replacement path is documented here: Newman alternative for CI: DevTools CLI.

When Postman is still the right tool

Even when collections stop scaling as a regression artifact, Postman can remain useful for:

- Exploratory debugging

- Sharing example requests with non-CI consumers

- Quick one-off reproductions

The shift is not “ban Postman”. The shift is: stop treating the collection as the source of truth for CI gates.

A pragmatic transition plan (without rewriting everything)

If you have a large Postman investment, you do not need a big-bang rewrite. The scalable approach is to migrate by workflow criticality:

- Pick 3 to 5 business-critical paths that must block merges.

- Rebuild those as deterministic, chained YAML flows.

- Wire them into PR CI as a smoke gate.

- Gradually move the rest of the suite in slices.

If you want a concrete, step-by-step migration process (export, sanitize, handle scripts, map variables, run in CI), use: Migrate from Postman to DevTools.

The point: make the test artifact match how your team ships code

Postman for testing API works well when humans are the runner. Once CI is the runner, the artifact has to behave like code:

- Text-first

- Diffable

- Deterministic

- Composable

If your collections are getting harder to review than the API changes they are meant to validate, that is your signal. Move the regression truth into Git-native YAML flows, keep chaining explicit, and let CI execute exactly what reviewers approved.